Learning without Forgetting

People

Zhizhong Li, Derek Hoiem

Abstract

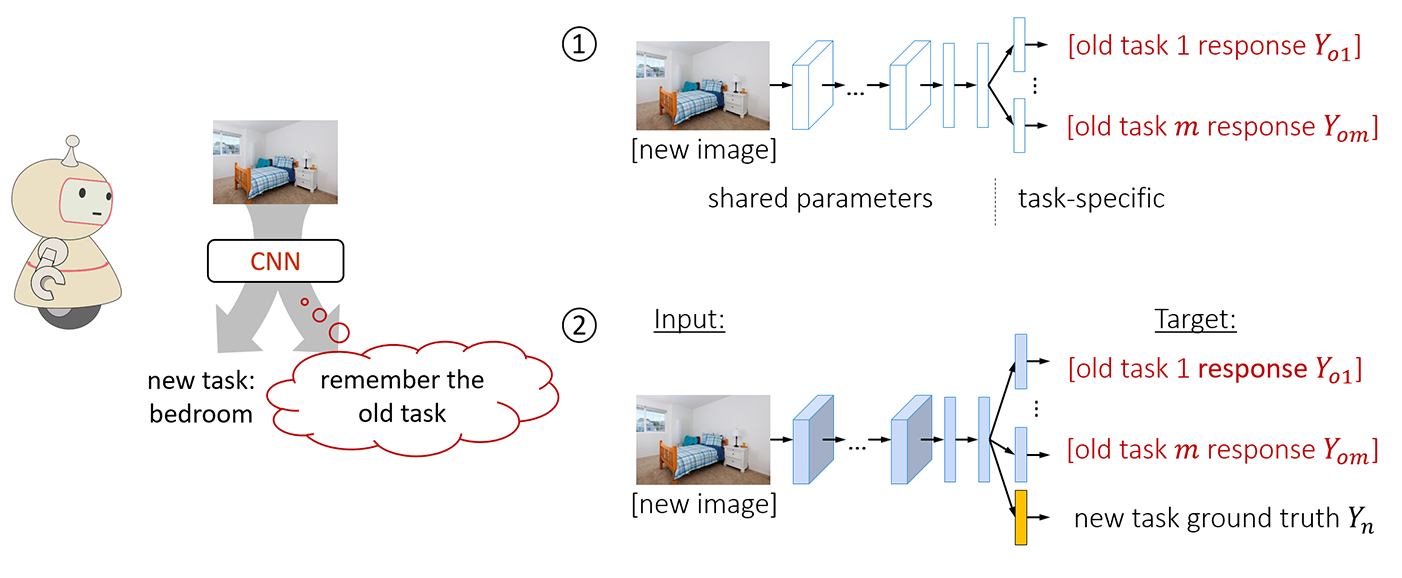

When building a unified vision system or gradually adding new capabilities to a system, the usual assumption is that training data for all tasks is always available. However, as the number of tasks grows, storing and retraining on such data becomes infeasible. A new problem arises where we add new capabilities to a Convolutional Neural Network (CNN), but the training data for its existing capabilities is unavailable. We propose our Learning without Forgetting method, which uses only new task data to train the network while preserving the original capabilities. Our method performs favorably compared to the commonly used feature extraction and fine-tuning adaptation techniques, and performs similarly to multitask learning, which uses the original task data that we assume to be unavailable. A more surprising observation is that Learning without Forgetting may be able to replace fine-tuning as standard practice for improved new task performance.
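The abstract does not spell out the training objective, so the following is only a minimal sketch of one way "train on new task data while preserving the original capabilities" can be expressed as a loss. It assumes a distillation-style setup: the original network's outputs on the new task images are recorded before training, and the updated network is penalized for drifting away from them. The function names, the `lambda_old` weight, and the `temperature` value are illustrative assumptions, not taken from this page or the released code.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(target_probs, predicted_probs, eps=1e-12):
    """Cross-entropy between a target and a predicted distribution."""
    return -sum(t * math.log(p + eps)
                for t, p in zip(target_probs, predicted_probs))


def lwf_loss(new_logits, new_label, old_logits, recorded_old_logits,
             lambda_old=1.0, temperature=2.0):
    """Hypothetical per-example loss: new-task term plus old-task term.

    new_logits          -- current outputs of the new task head
    new_label           -- ground-truth class index for the new task
    old_logits          -- current outputs of the old task head
    recorded_old_logits -- old task outputs of the ORIGINAL network on the
                           same new-task image, recorded before training
    lambda_old          -- assumed weight balancing old vs. new task
    temperature         -- assumed softening temperature for distillation
    """
    # New task: ordinary cross-entropy against the one-hot label.
    one_hot = [1.0 if i == new_label else 0.0
               for i in range(len(new_logits))]
    loss_new = cross_entropy(one_hot, softmax(new_logits))

    # Old task: keep the softened outputs close to the recorded ones.
    # No original task data is needed, only the recorded responses.
    target = softmax(recorded_old_logits, temperature)
    current = softmax(old_logits, temperature)
    loss_old = cross_entropy(target, current)

    return loss_new + lambda_old * loss_old
```

Under these assumptions, a network whose old task outputs still match the recorded responses incurs a smaller loss than one whose outputs have drifted, which is the behavior the abstract describes as preserving the original capabilities.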

Paper and Spotlight Presentation

Li, Zhizhong, and Hoiem, Derek. “Learning without forgetting.” IEEE Transactions on Pattern Analysis and Machine Intelligence (2017).

[PDF]

Li, Zhizhong, and Hoiem, Derek. “Learning without forgetting.” European Conference on Computer Vision. Springer International Publishing, 2016.

[PDF] | [Poster] | [Spotlight] | [Code]

Bibtex

@inproceedings{li2016learning,
  title={Learning Without Forgetting},
  author={Li, Zhizhong and Hoiem, Derek},
  booktitle={European Conference on Computer Vision},
  pages={614--629},
  year={2016},
  organization={Springer}
}

Acknowledgement

This work is supported in part by NSF Awards 14-46765 and 10-53768 and ONR MURI N000014-16-1-2007.